Suspect in Molotov attack on Sam Altman is a eugenicist who believes AI will end the world

Daniel "Butlerian Jihadist" Moreno-Gama had written about "Ethical Eugenics" and "AI Existential Risk" prior to the terrorist attack

ChatGPT creator OpenAI was founded in 2015 as a non-profit AI lab with the mission of researching how to create AI with human-level capabilities. The concept of AI that is truly capable of thought and reasoning is commonly referred to as artificial general intelligence (AGI).

Many AI researchers who believe AGI is close worry that it could transform into artificial superintelligence (ASI), develop a negative opinion of humanity, and pose an "existential risk" to us if it decides to use its advanced intelligence in service of human extermination.

There is no evidence that this scenario is anything more than a science fiction fantasy.

Nevertheless, this hasn't stopped many people from worrying about the theoretical risks posed by ASI and, specifically, the risks posed by the attempts of OpenAI and its CEO Sam Altman to create AGI/ASI using funding from for-profit investors like Microsoft. These ongoing concerns led to the creation of rival AI lab Anthropic in 2021. In 2023, Sam Altman was briefly ousted from his position as CEO of OpenAI before being reinstated a few days later. I wrote an essay called "WTF is going with AI" that aimed to explain the internal corporate dynamics of the situation. My argument was that the discord within OpenAI represented a sort of schism between the optimist Altman and more cautious factions within the company over the company's approach to safety.

Last weekend, this escalated to a new level of seriousness when a Molotov cocktail was thrown at Sam Altman's home. The situation is still developing, but based on the information we have now, it seems like the motivation for the attack was simply a continuation of the cult-like infighting that previously led to Altman's temporary firing.

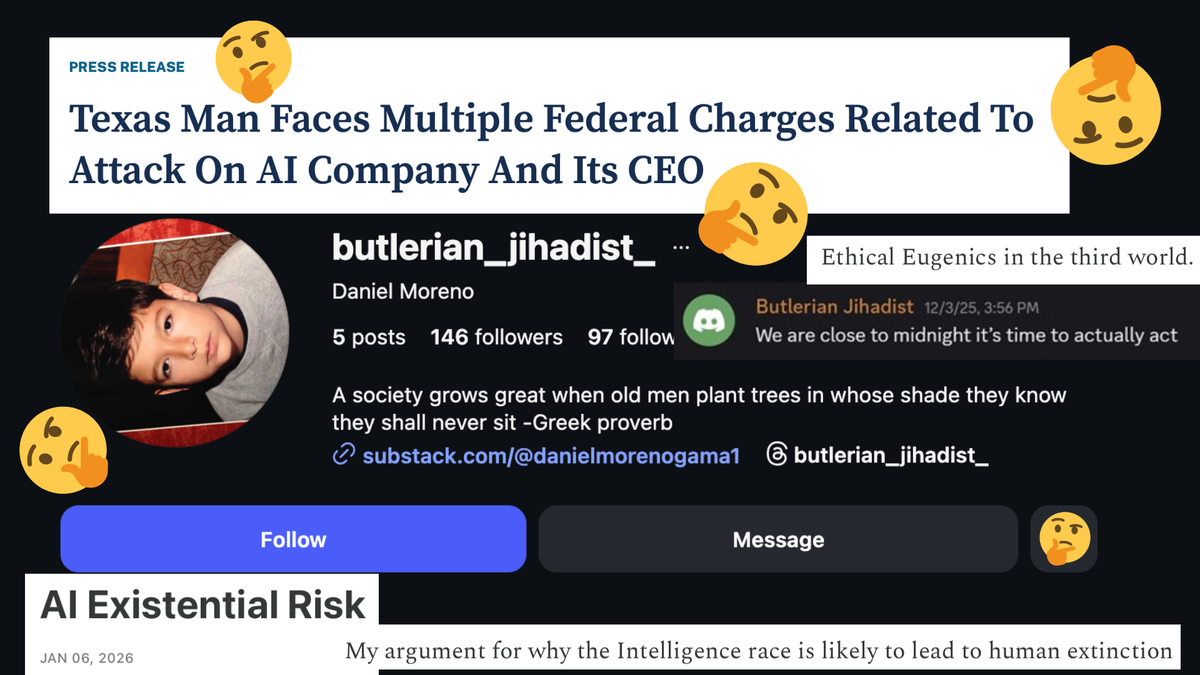

Police have arrested Daniel Alejandro Moreno-Gama in connection with the Molotov cocktail attack on Altman's home. According to police reports, he also traveled to OpenAI's headquarters with the stated intention to "burn the building down and kill anyone inside".

Like many AI risk believers, he had a blog on Substack where he discussed his opinions on the topic. Moreno-Gama's views fall firmly in the pessimistic camp - he believes that AI development is "likely to lead to human extinction" and blames Sam Altman personally for contributing to the race to create artificial general intelligence (AGI). The essay ends in a call for action, declaring that "there is nothing more pathetic than letting evil occur and doing nothing to stop it". In another post called "A Eulogy For Man", he claims that "all living and artificially living organisms have shown to be selfish".

This alarming rhetoric has been used by many believers in superintelligence risk. OpenAI itself used similarly apocalyptic language in a 2023 blog post which declares that "superintelligence will be the most impactful technology humanity has ever invented" while warning that it could lead to "human extinction".

OpenAI's doomer critics agree with this framing, but believe that the strong profit motives around AGI mean any safety efforts are likely to fail, and they favor stopping research entirely. For example, Guido Reichstadter, co-founder of the Stop AI activist group, claims that he supports "all efforts to stop (AGI) by any means necessary". Stop AI has an official policy of non-violence, but this is tested by the apocalyptic nature of their beliefs: a protest by Stop AI at OpenAI's headquarters led to violence from Sam Kirchner, the group's other co-founder. Stop AI's official chat has a one-strike rule against violent ideation, indicating that such rhetoric is an ongoing issue for the group.

In July 2025, Reichstadter argued that "we’ve got to stop being afraid of the repercussions of actually stopping them whether that means discomfort, jail, prison or anything else". Two days later, he went even further, calling for people to physically force AI company CEOs like Sam Altman to stop working on AGI, and even appeared to call for violent revolution against governments that aren't "upholding (their) responsibility to protect your life by stopping AI development". Stop AI shares goals and protest tactics with the PauseAI movement, which Daniel Moreno-Gama is a follower of.

Thankfully, fellow AI extremism researcher Dr. Nirit Weiss-Blatt has documented Moreno-Gama's posts in the PauseAI Discord server. In his introduction, written at age 18 in 2024, he states that he is "willing to learn and help whatever means necessary". In December 2025, he posted "We are close to midnight it's time to actually act".

Moreno-Gama's username on Discord matches his Instagram: "Butlerian Jihadist". This is a reference to the "Butlerian Jihad", a war against thinking machines that takes place in Frank Herbert's Dune series of books.

Like many AI risk believers, Daniel Moreno-Gama also believes in race science. In a February Substack post titled "An analysis of the political extremes and their resolutions", he claimed that "East Asian people are on average more intelligent than Black people".

Moreno-Gama doesn't completely buy into traditional White Nationalism. He presents a situation in which the world follows the logic of a hypothetical fascist named "Charlie" and a global White Nationalist hegemony is achieved. Following this, Moreno-Gama claims that the White Nationalists would begin going after each other before "genetically modified children who age 5 times quicker than normal children, and who have superhuman abilities start rolling around", "biology is recognized as a weakness", and "all organic life is exterminated and replaced by mechanical beings, which are then replaced by higher dimensional consciousnesses". He elaborates on his issues with Nazism, claiming that "Nazi Germany may have ushered in Utopia for a few short years, but then the purity testing and purging would’ve continued". Despite not being white himself, Moreno-Gama declares that "whiteness in these countries is a decent correlative to some of the things I value" and advocates for "Ethical Eugenics in the third world" in which we "increase IQ naturally over time by selecting the smartest children".

Ironically, Daniel Moreno-Gama's intended target also has his own spotty history with race. A 2023 NY Magazine article about Sam Altman cited a Black entrepreneur who claims he was treated with "clear and lasting disregard" by Altman and implies that he's racist. Altman also declined to confront popular tech podcaster Lex Fridman when he brought up race science as an example of a "harmful truth" during an interview.

This obsession with intelligence isn't an accident. In order to conceptualize a future in which ASI exists, AGI believers rely on an assumption that AI capabilities are increasing and will continue to increase. This necessitates a way of quantifying the intelligence of AI models and, in turn, quantifying the intelligence of humans so that it can be determined when a model reaches "human-level". From the perspective of AI researchers (many of whom lack a background in the humanities), IQ and related testing frameworks are an easy way of measuring intelligence. By extrapolating this intelligence exponentially into the future, believers can then make projections of when ASI will be achieved - a process that strongly resembles Christian attempts at predicting the Rapture and Second Coming of Jesus Christ, with "superintelligence" taking the place of God.